A question enters your private AI. Here's what really happens.

A sovereign, private AI dedicated to your organization: never shared, never pooled. But a private AI isn't enough. An LLM with RAG does only 15% of the work. The remaining 85% determines whether the answer is reliable, sourced and defensible in front of an auditor. Here are the 5 architectural layers that make the difference, all running exclusively on your data, within your own instance.

Step 1

Sovereignty & Isolation

Your instance. Your model. Your rules.

Each customer has their own isolated instance. No information sharing, no context leakage between tenants. Your documents are never used to train third-party models.

Frontier → sovereign distillation. Knowledge from frontier models is transferred to compact DSLMs (Domain-Specific Language Models, 3-7B parameters) that can be hosted on-premise. Frontier quality, sovereign hosting, controlled costs.

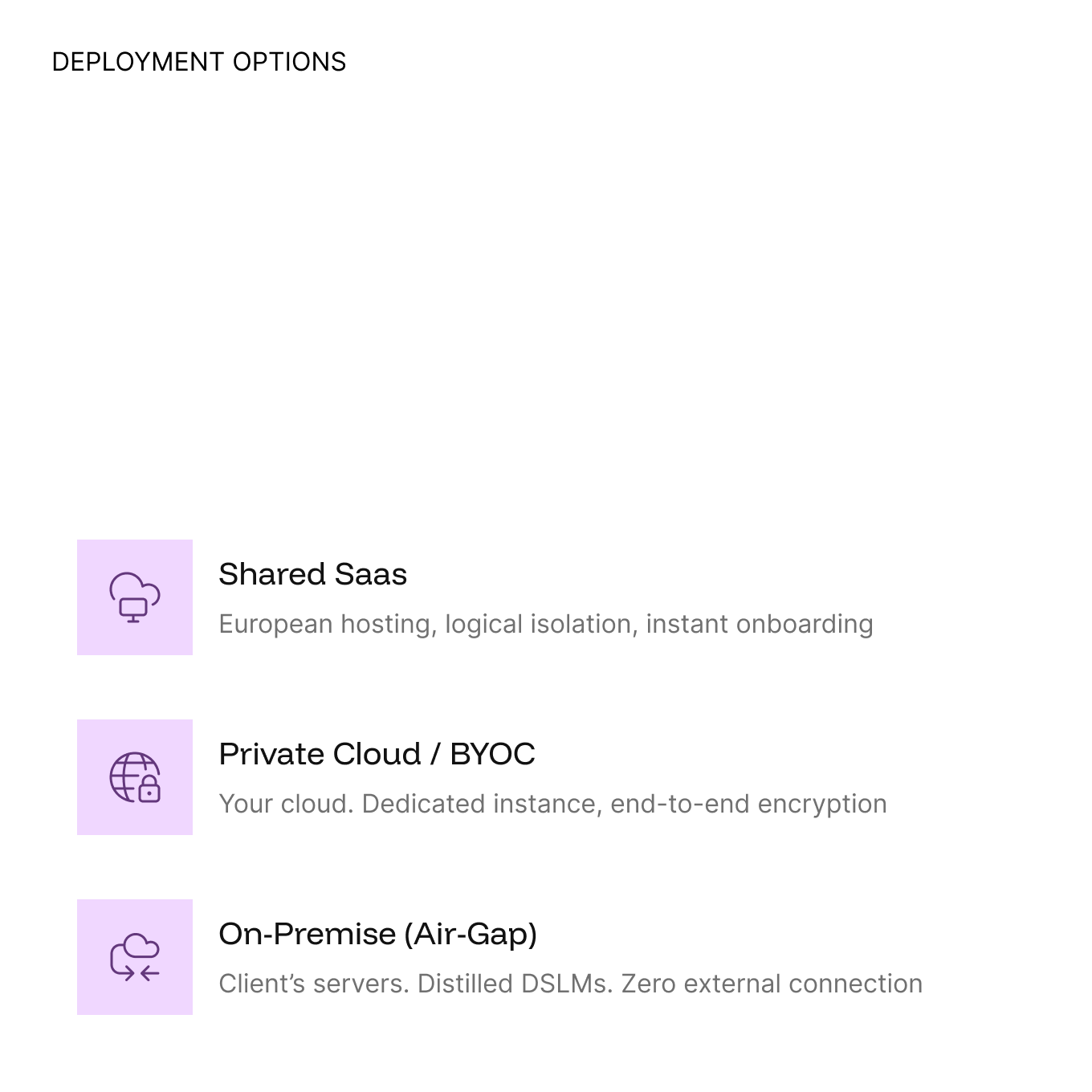

Zero-Knowledge architecture. 3 deployment modes: Shared SaaS, Private Cloud/BYOC, On-Premise (air-gap compatible). You choose, and you can switch later. Your data stays where you want it to be.

Step 2

Multi-Agent Orchestration

89 specialized agents. In parallel. Not a single LLM.

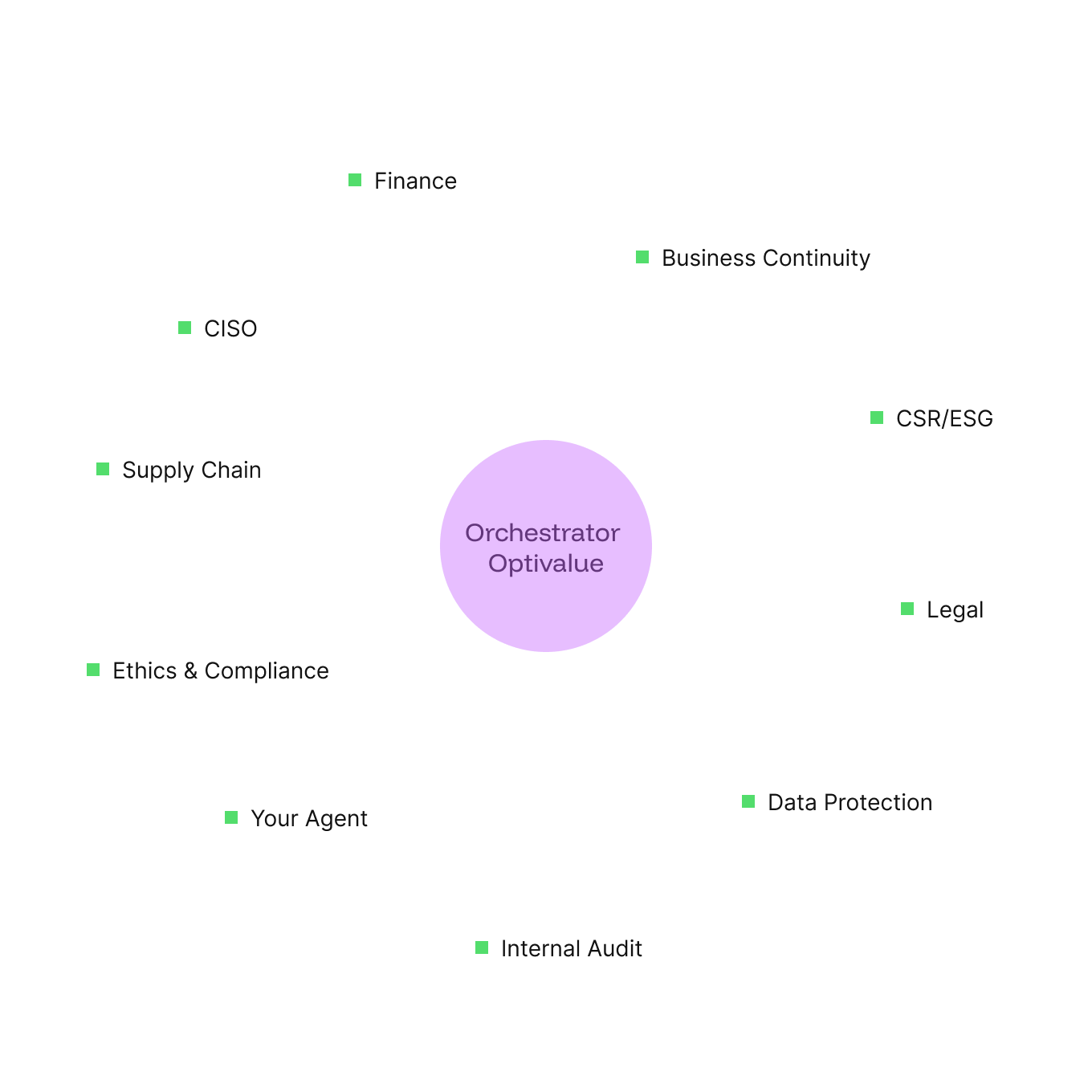

A classic LLM + RAG architecture calls a single generalist model for every question. Optivalue.ai deploys DSLMs (Domain-Specific Language Models): 89 agents, each specialized by field and packaged as modular Skills with dedicated instructions, templates and rules.

The Orchestrator analyzes the domain of each question and launches the relevant agents in parallel (Swarm pattern). A multi-domain questionnaire simultaneously activates Finance, HR, GDPR, and IT agents, each in its own isolated context, with up to 100 sub-agents executed in parallel.
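To make the Swarm pattern concrete, here is a minimal Python sketch of the idea, not Optivalue.ai's actual implementation. The keyword table, the `detect_domains`, `run_agent` and `orchestrate` functions, and the placeholder answers are all assumptions made for illustration; only the parallel fan-out, the per-agent isolated context and the cap of 100 sub-agents come from the description above.

```python
import asyncio

# Hypothetical routing table: a real orchestrator would use a classifier,
# not keyword matching. Domains mirror the ones named in the text.
DOMAIN_KEYWORDS = {
    "finance": ["revenue", "invoice", "budget"],
    "hr": ["employee", "hiring", "payroll"],
    "gdpr": ["personal data", "gdpr", "consent"],
    "it": ["server", "encryption", "backup"],
}


def detect_domains(question: str) -> list[str]:
    """Return every domain whose keywords appear in the question."""
    text = question.lower()
    return [d for d, kws in DOMAIN_KEYWORDS.items() if any(k in text for k in kws)]


async def run_agent(domain: str, question: str) -> dict:
    """Run one specialized agent in its own context (no shared state)."""
    context = {"domain": domain, "question": question}  # isolated per agent
    await asyncio.sleep(0)  # stands in for the real model call
    return {"domain": context["domain"], "answer": f"[{domain}] draft answer"}


async def orchestrate(question: str, max_parallel: int = 100) -> list[dict]:
    """Fan the question out to all relevant agents, capped at max_parallel."""
    semaphore = asyncio.Semaphore(max_parallel)

    async def guarded(domain: str) -> dict:
        async with semaphore:
            return await run_agent(domain, question)

    # gather() runs the agents concurrently and preserves input order.
    return await asyncio.gather(*(guarded(d) for d in detect_domains(question)))


results = asyncio.run(
    orchestrate("How is employee personal data protected by encryption on each server?")
)
```

In this sketch the sample question activates the HR, GDPR and IT agents at once, while the Finance agent stays idle because nothing in the question concerns it.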

4 orchestration modes adapted to each situation: speed, precision, cost or complete autonomy.

Step 3

Anti-hallucination

7 layers of protection. Zero hallucinations.

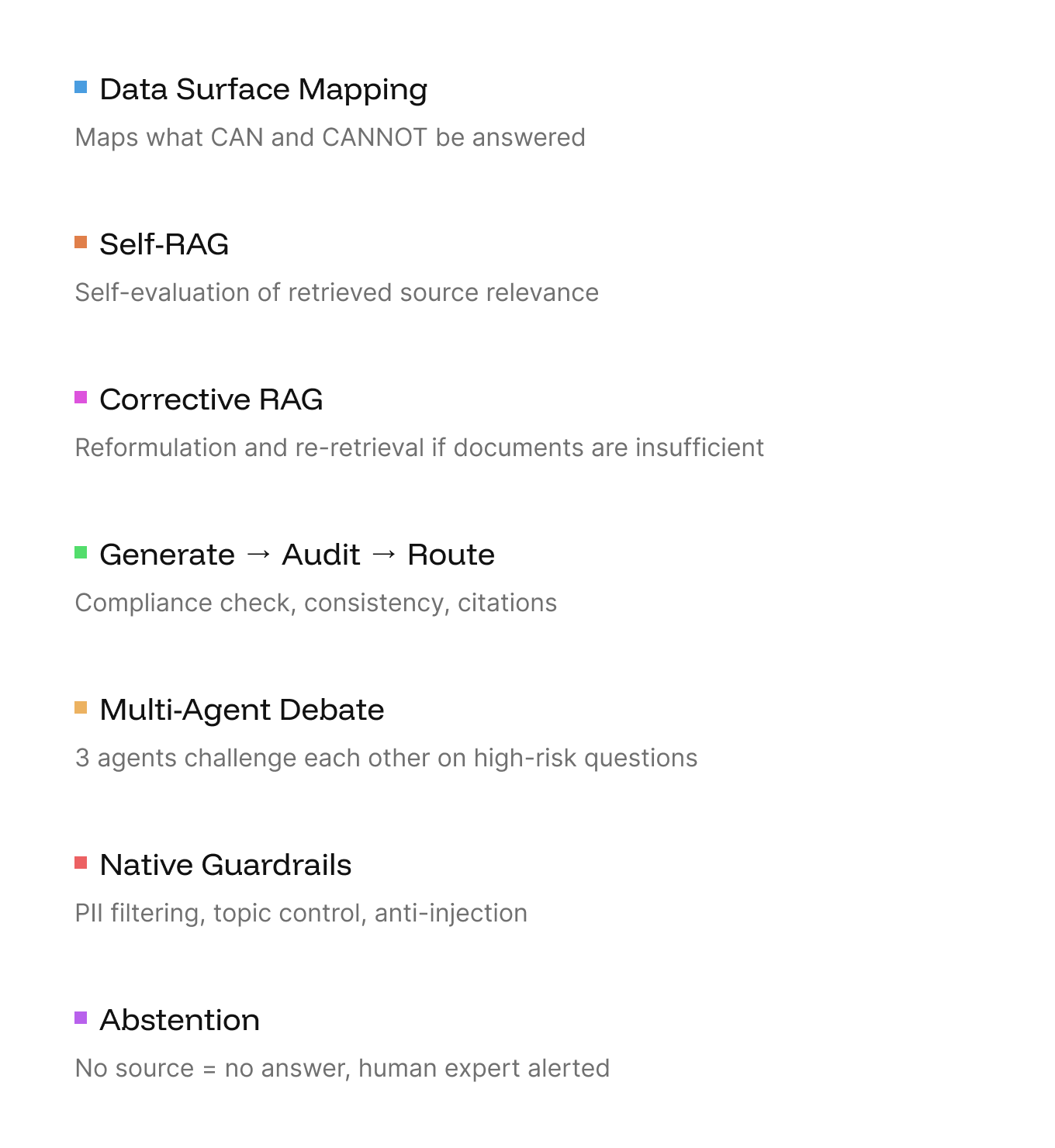

This is the key differentiator. An LLM with RAG retrieves documents and generates a response. Optivalue.ai puts every response through 7 independent verification layers before it reaches the user.

The last line of defense: abstention. When the system has no source, it doesn't respond. It flags the gap, identifies the right expert, and routes the question to them. The only player in the market whose AI can say “I don't know.”

For high-risk questions, a dialectical debate pits three agents (Commercial, Critical, Legal) against each other before producing the final answer. The result: a nuanced, qualified and defensible response.
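The abstention rule can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the product's code: the `Outcome` type, the `EXPERTS` directory, the example e-mail addresses and the `answer_or_abstain` function are all hypothetical names. The only behavior taken from the text is that an answer with no source is never generated and the question goes to an expert instead.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Outcome:
    status: str                      # "answered" or "escalated"
    text: str
    sources: list = field(default_factory=list)
    expert: Optional[str] = None


# Hypothetical expert directory, keyed by domain.
EXPERTS = {"security": "security-lead@example.com"}
DEFAULT_EXPERT = "knowledge-owner@example.com"


def answer_or_abstain(question: str, domain: str, sources: list) -> Outcome:
    """Answer only when at least one source backs the response."""
    if not sources:
        # No source: flag the gap and hand the question to a human expert.
        expert = EXPERTS.get(domain, DEFAULT_EXPERT)
        return Outcome(
            status="escalated",
            text=f"No source found for {question!r}. Routed to {expert}.",
            expert=expert,
        )
    # Placeholder for the real generation step, which would cite the sources.
    return Outcome(
        status="answered",
        text="Draft answer grounded in the cited sources.",
        sources=list(sources),
    )


gap = answer_or_abstain("Do you run annual penetration tests?", "security", [])
ok = answer_or_abstain("Do you encrypt backups?", "security", ["policy-12.pdf"])
```

The design choice worth noting is that abstention is decided before any text is generated, so there is no unsourced draft to leak into the final answer.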

Step 4

Shredder Agent

Before answering, understand the true intent of the question.

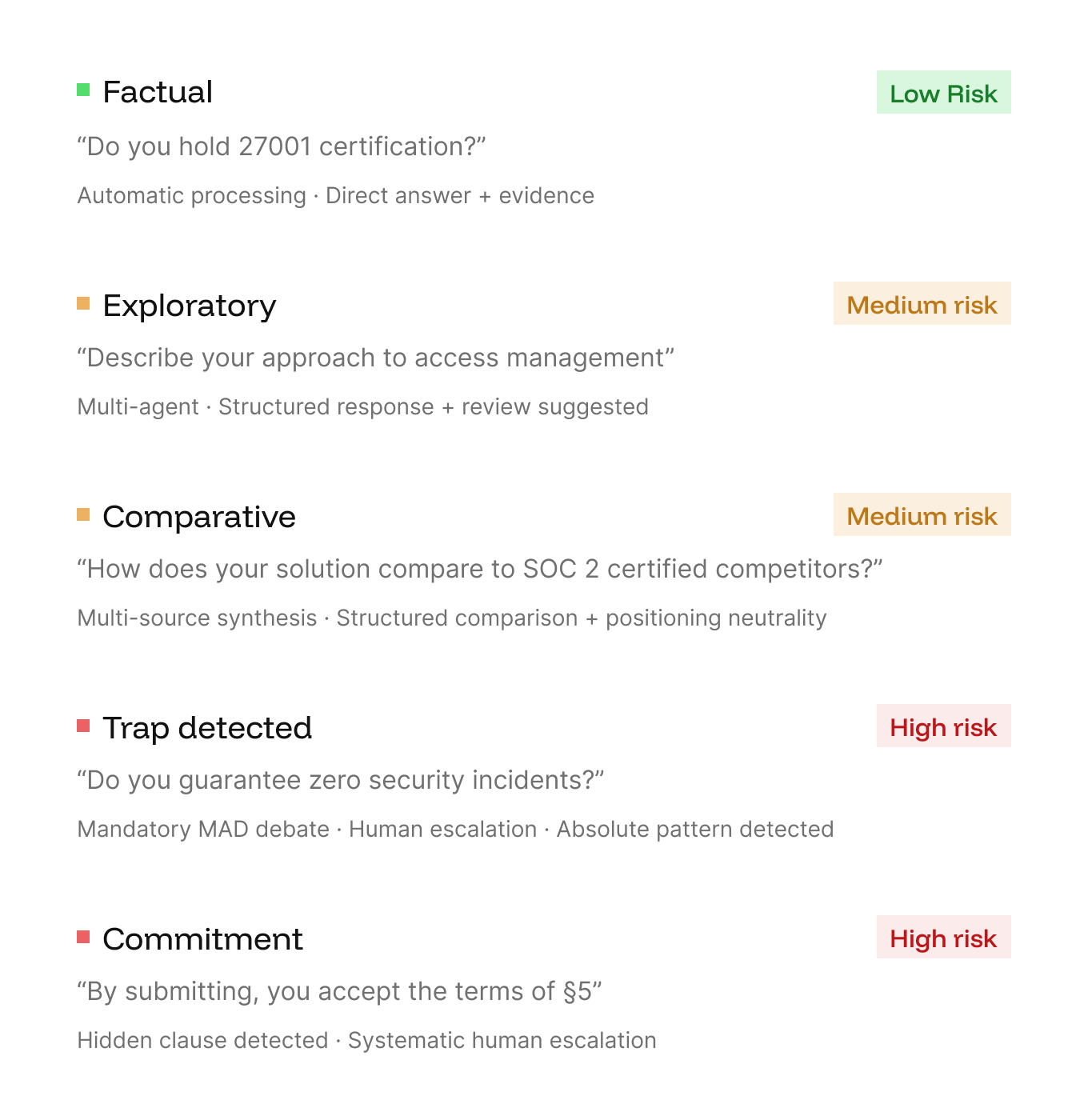

A 200-question questionnaire contains 5 radically different types of question: factual, exploratory, comparative, trick questions and contractual commitments. An LLM with RAG treats them all the same.

The Shredder Agent analyzes each question before any generation. It classifies intent, detects trick questions (absolutes, double negatives, implicit commitments), and assigns a risk level that determines the entire downstream workflow.

A question like “Do you guarantee 100% uptime?” does not trigger the same pipeline as “Do you have ISO 27001 certification?”
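As a rough illustration of why these two questions diverge, here is a toy Python sketch of trick-question detection. It assumes simple regular expressions where the real Shredder Agent presumably relies on a model; the patterns, the `shred` function and the two-level risk scale are hypothetical and chosen only to show the principle.

```python
import re

# Illustrative patterns only; a production classifier would be far richer.
ABSOLUTE = re.compile(r"\b100\s?%|\b(?:always|never)\b", re.IGNORECASE)
NEGATION = re.compile(r"\b(?:not|no|never|without)\b|n't\b", re.IGNORECASE)
COMMITMENT = re.compile(r"\b(?:guarantee|commit|warrant|undertake)\w*", re.IGNORECASE)


def shred(question: str) -> dict:
    """Flag trap patterns in a question and derive a risk level from them."""
    flags = []
    if ABSOLUTE.search(question):
        flags.append("absolute")
    if len(NEGATION.findall(question)) >= 2:
        flags.append("double_negative")
    if COMMITMENT.search(question):
        flags.append("implicit_commitment")
    # Assumption: any detected trap raises the question to high risk.
    risk = "high" if flags else "low"
    return {"question": question, "flags": flags, "risk": risk}


uptime = shred("Do you guarantee 100% uptime?")
iso = shred("Do you have ISO 27001 certification?")
```

Here the uptime question is flagged as both an absolute and an implicit commitment, while the ISO 27001 question raises no flag and can follow a lighter factual pipeline.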

Step 5

Explainable trust score

Every answer scored. Every score explained. Compliant with the EU AI Act.

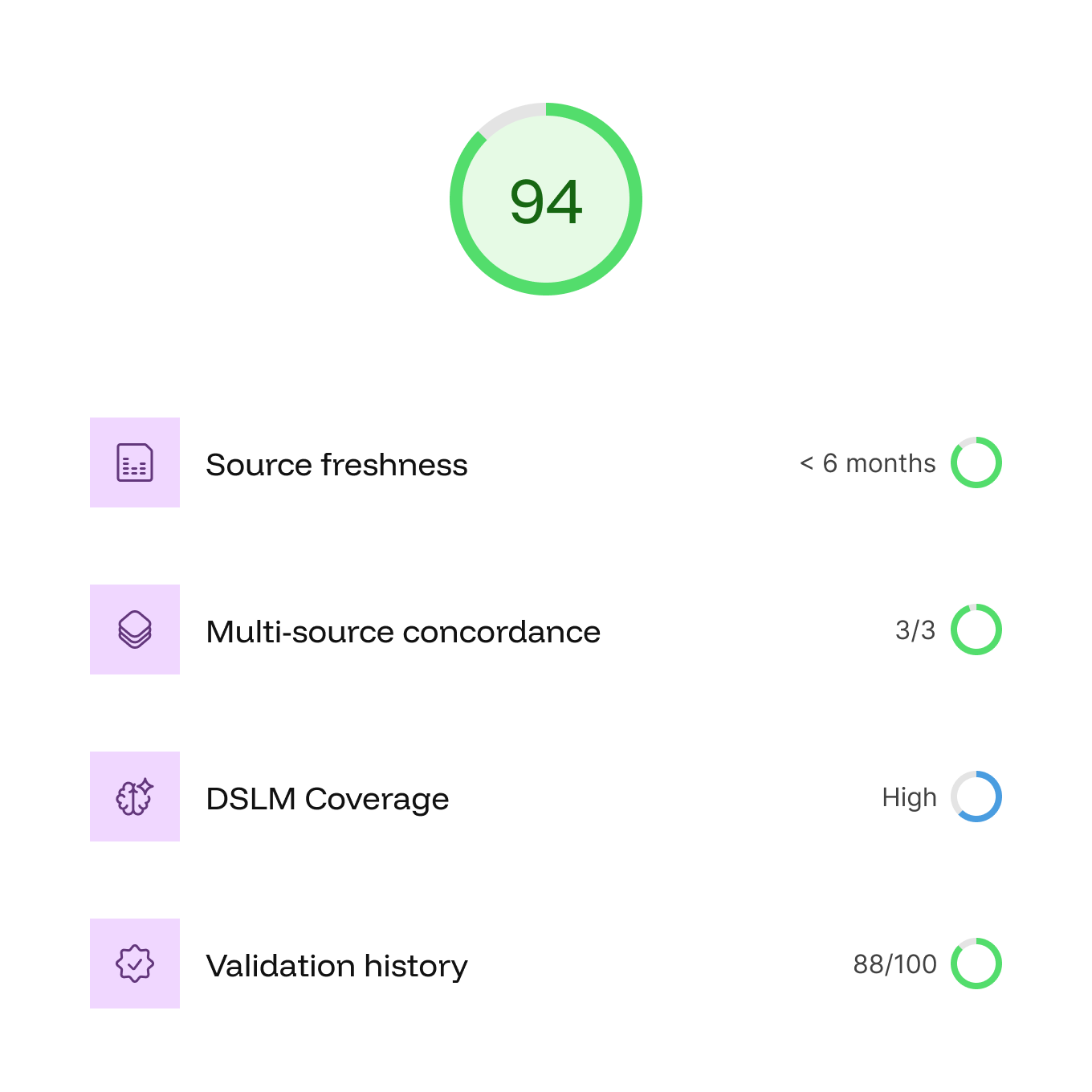

Each answer shows a multidimensional confidence score: not an opaque percentage, but 4 explained dimensions, each clickable.

The EU AI Act (August 2026) mandates transparency and explainability. Optivalue.ai is already compliant: every dimension is traceable, every source verifiable.

The Trust Score, computed per agent × customer, dynamically calibrates autonomy: the more the AI demonstrates its reliability, the more autonomy it gains. 4 levels: Full Auto, Co-Pilot, Assisted, Controlled.
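One way to picture this calibration is a ledger that tracks, for each agent × customer pair, how many answers a human reviewer approved. The Python sketch below is purely illustrative: the thresholds, the minimum number of reviews, the approval-rate formula and the `TrustLedger` class are assumptions, since the page does not say how the score is computed. Only the four level names come from the text.

```python
from collections import defaultdict

# Assumed thresholds on the approval rate, highest first.
LEVELS = [(0.95, "Full Auto"), (0.85, "Co-Pilot"), (0.70, "Assisted")]
MIN_REVIEWS = 20  # assumed: below this, there is too little evidence to relax control


class TrustLedger:
    """Tracks reviewed answers per (agent, customer) pair."""

    def __init__(self) -> None:
        # (agent, customer) -> [approved_count, total_count]
        self._stats = defaultdict(lambda: [0, 0])

    def record(self, agent: str, customer: str, approved: bool) -> None:
        stats = self._stats[(agent, customer)]
        stats[0] += int(approved)
        stats[1] += 1

    def level(self, agent: str, customer: str) -> str:
        approved, total = self._stats[(agent, customer)]
        if total < MIN_REVIEWS:
            return "Controlled"
        score = approved / total
        for threshold, name in LEVELS:
            if score >= threshold:
                return name
        return "Controlled"


ledger = TrustLedger()
for i in range(20):
    ledger.record("gdpr", "acme", approved=True)    # 20 of 20 approved
    ledger.record("hr", "acme", approved=(i >= 2))  # 18 of 20 approved
```

With these assumed thresholds, the GDPR agent reaches Full Auto for this customer, the HR agent sits at Co-Pilot, and any agent with fewer than 20 reviewed answers stays Controlled.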